Broadcom’s AI Ascent: Inside the Quarter That Changed Everything

How Broadcom turned a quiet “beat and raise” quarter into a defining statement about the future of custom AI silicon — and why Wall Street is finally paying attention.

Broadcom’s first-quarter earnings for fiscal 2026 arrived with the quiet confidence of a company that knows exactly where it is going.

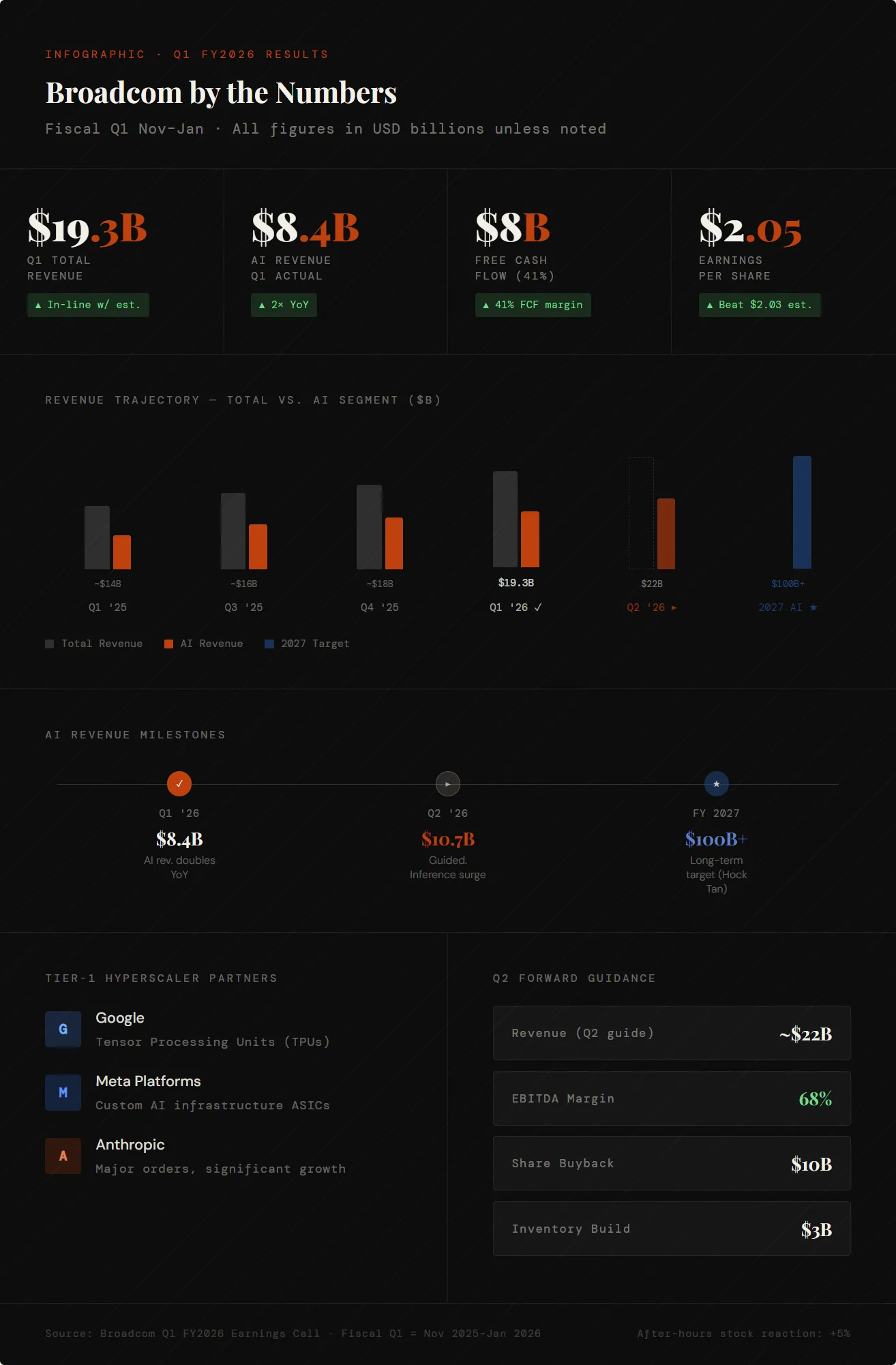

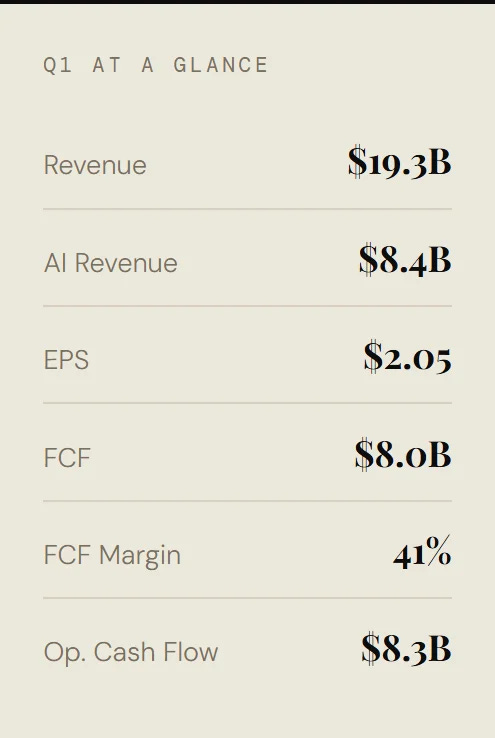

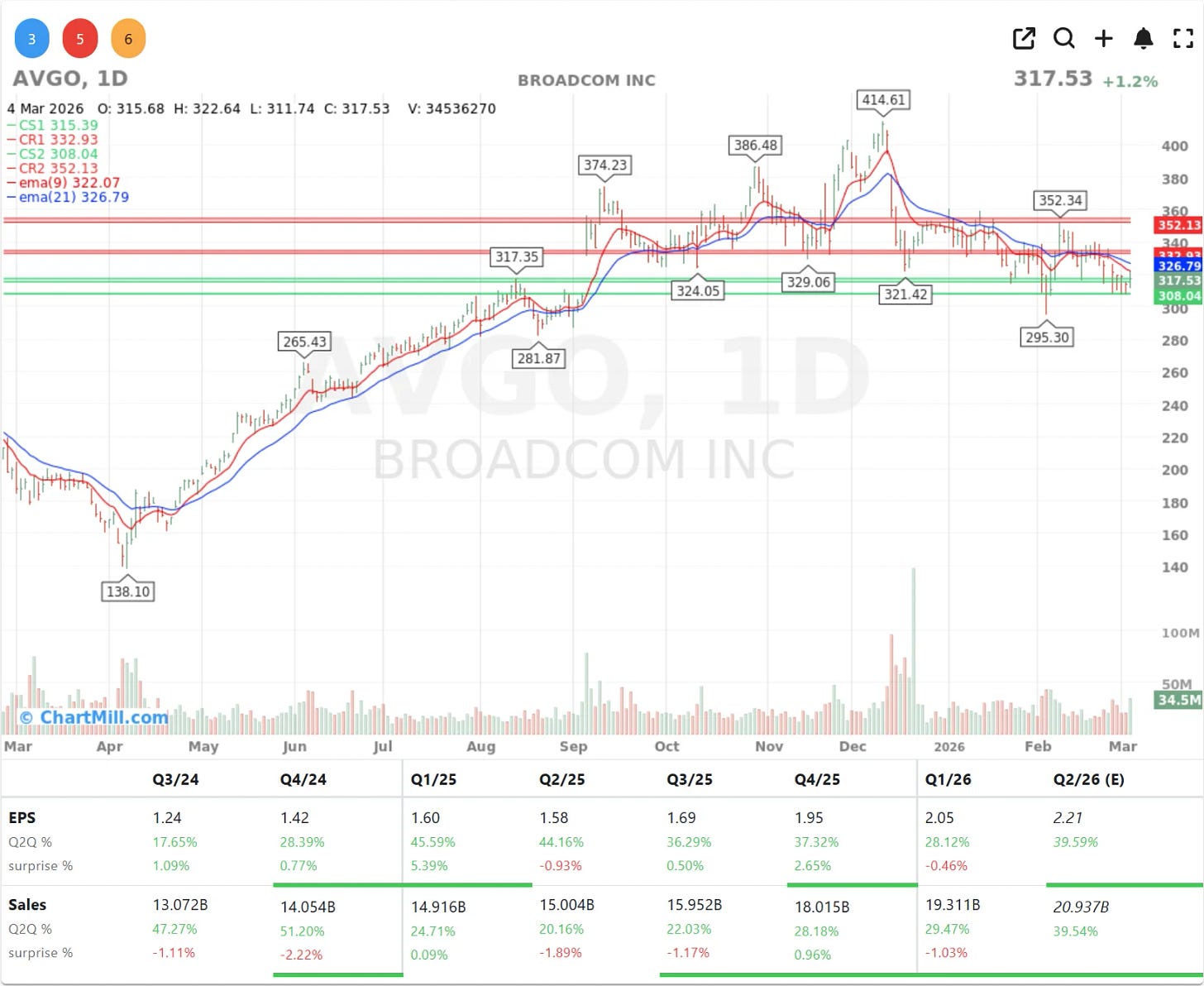

Revenue of $19.3 billion landed precisely in line with analyst estimates, a “beat and raise” quarter that, on the surface, looked orderly. But scratch beneath those headline numbers and a more consequential story emerges: a legacy chipmaker has completed one of the most deliberate strategic transformations in modern semiconductor history.

Artificial intelligence now accounts for $8.4 billion of that quarterly total, a figure that has doubled year-over-year and shows no sign of plateauing.

For context, that single product segment would rank among the largest revenue lines at most Fortune 500 technology companies. Broadcom is no longer a chip supplier that happens to serve AI customers. It is an AI infrastructure company that also makes other chips.

“General-purpose GPUs are not sufficient on their own for hyperscalers who want to optimize their data centers.” - Hock Tan, CEO, Broadcom

The ASIC Advantage

The central thesis of CEO Hock Tan’s analyst call commentary was a pointed critique of the dominant GPU narrative. While Nvidia’s general-purpose graphics processors have powered the first wave of AI investment, Tan argued that they represent an inefficient long-term solution for the world’s largest data center operators.

Application-Specific Integrated Circuits (ASICs) are chips engineered for precisely defined AI workloads, and they can execute those tasks faster and more cheaply than a broad-market GPU.

This is not a theoretical advantage. Google’s Tensor Processing Units, designed in close collaboration with Broadcom, exemplify the approach: purpose-built silicon optimized for the specific mathematical operations that power large language models and neural networks. Meta and Anthropic, both named explicitly by management as key customers, are placing significant orders for similar custom silicon.

The competitive moat here is formidable. As Hock Tan noted during the call, designing an ASIC in a laboratory is one thing; producing it reliably at the scale required by a hyperscaler is another discipline entirely. Broadcom’s manufacturing execution capability, refined over years of serving demanding enterprise clients, is not easily replicated.

From Training to Inference: A Market Inflection

Perhaps the most strategically significant insight from the analyst call was a shift in the nature of AI demand itself. The initial boom in AI chip spending was overwhelmingly driven by training, the computationally intensive process of building large models from scratch. That phase attracted enormous capital and put Nvidia’s H100 and A100 GPUs on months-long waitlists.

Broadcom is now seeing a surge in demand for inference, the deployment phase, where a trained model actually answers questions, generates content, or makes decisions in production environments. This transition matters enormously. Inference workloads run continuously, at massive scale, across millions of end-user interactions every day.

The economics of inference favor custom, efficient silicon over expensive general-purpose GPUs. Broadcom’s ASIC business is positioned at exactly this inflection point.

The move from training to inference suggests the AI industry is exiting its experimental phase and entering mass-market deployment. That is a structural tailwind that does not diminish easily.

Cash Generation and Capital Confidence

Broadcom’s financial profile is as remarkable as its strategic positioning. In Q1, the company generated $8.3 billion in operating cash flow, translating to $8 billion in free cash flow after capital expenditures, a free cash flow margin of 41%. These are extraordinary numbers in any industry. In semiconductors, they are exceptional.
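The margin quoted above can be checked with a few lines of arithmetic. This is a minimal sketch that uses only the rounded figures from the article; the variable names are illustrative, and the implied capital expenditure is simply the gap between the two cash flow numbers, not a reported figure.

```python
# Figures quoted in the article, in billions of US dollars (rounded).
revenue = 19.3
operating_cash_flow = 8.3
free_cash_flow = 8.0

# Capital expenditures implied by the gap between the two cash flow figures.
# This is an inference from rounded inputs, not a number reported by the company.
implied_capex = operating_cash_flow - free_cash_flow

# Free cash flow margin: free cash flow as a share of revenue.
fcf_margin = free_cash_flow / revenue

print(f"Implied capex: ${implied_capex:.1f}B")
print(f"Free cash flow margin: {fcf_margin:.1%}")
```

On these rounded inputs the margin comes out slightly above 41%, consistent with the figure cited.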

Management’s response to this cash generation was equally telling: a $10 billion share buyback program, signaling strong internal confidence in the company’s trajectory. A buyback of this size is not a defensive move. It is a statement that the current market valuation still underestimates Broadcom’s long-term earnings power.

The Road to $100 Billion

Guidance for the second quarter was the immediate catalyst for the stock’s after-hours move of 5%. Revenue is expected to reach $22 billion, meaningfully ahead of the $20.5 billion consensus, with AI-specific revenue climbing to $10.7 billion and EBITDA margins holding at a robust 68%.
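To put the guidance in proportion, the sketch below compares it with consensus and with the quarter just reported. It relies only on the rounded figures quoted in this article; the helper `pct_change` and the variable names are illustrative, not anything Broadcom publishes.

```python
def pct_change(new: float, old: float) -> float:
    """Relative change from old to new, expressed as a fraction."""
    return new / old - 1.0


# Figures quoted in the article, in billions of US dollars (rounded).
q1_revenue = 19.3
q1_ai_revenue = 8.4
q2_revenue_guide = 22.0
q2_consensus = 20.5
q2_ai_guide = 10.7

# How far the guidance sits above consensus, and above the prior quarter.
guide_vs_consensus = pct_change(q2_revenue_guide, q2_consensus)
sequential_growth = pct_change(q2_revenue_guide, q1_revenue)
ai_sequential_growth = pct_change(q2_ai_guide, q1_ai_revenue)

# AI as a share of total revenue, reported quarter versus guided quarter.
ai_share_q1 = q1_ai_revenue / q1_revenue
ai_share_q2 = q2_ai_guide / q2_revenue_guide

print(f"Guidance vs. consensus:      {guide_vs_consensus:+.1%}")
print(f"Sequential revenue growth:   {sequential_growth:+.1%}")
print(f"Sequential AI growth:        {ai_sequential_growth:+.1%}")
print(f"AI share of revenue, Q1:     {ai_share_q1:.1%}")
print(f"AI share of revenue, Q2 (g): {ai_share_q2:.1%}")
```

On these inputs the guidance sits roughly 7% above consensus, and AI would rise from a little under 44% of revenue to nearly 49%.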

But the longer arc is more audacious. Hock Tan suggested that AI-related revenue could exceed $100 billion by 2027. Given that AI revenue is already running at an annualized rate of roughly $34 billion on Q1 results, rising to about $43 billion on Q2 guidance, the target is aggressive but not implausible. It would require continued share gains, sustained hyperscaler investment, and successful execution on the inference opportunity, all areas where Broadcom’s current trajectory is encouraging.
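A rough way to gauge the distance to that target is to annualize the quarterly AI figures and compare them with $100 billion. The sketch below assumes a simple four-times-quarterly run rate, which ignores any growth within the year, and it treats the $100 billion as an annual figure; both are assumptions for illustration, not something the article or the company specifies.

```python
QUARTERS_PER_YEAR = 4


def annualized(quarterly_revenue: float) -> float:
    """Simple run rate: one quarter's revenue repeated for a full year."""
    return quarterly_revenue * QUARTERS_PER_YEAR


# Figures quoted in the article, in billions of US dollars (rounded).
q1_ai_revenue = 8.4      # reported Q1 AI revenue
q2_ai_guide = 10.7       # guided Q2 AI revenue
target = 100.0           # AI revenue level suggested for 2027

run_rate_q1 = annualized(q1_ai_revenue)
run_rate_q2 = annualized(q2_ai_guide)

# How many times larger the target is than each run rate.
multiple_from_q1 = target / run_rate_q1
multiple_from_q2 = target / run_rate_q2

print(f"Run rate on Q1 results:  ${run_rate_q1:.1f}B ({multiple_from_q1:.2f}x to target)")
print(f"Run rate on Q2 guidance: ${run_rate_q2:.1f}B ({multiple_from_q2:.2f}x to target)")
```

Either way, the target implies AI revenue growing to somewhere between two and three times its current annualized pace.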

CFO Kirsten Spears added operational color to the growth narrative, noting that inventory has been deliberately built up to $3 billion, with days of inventory on hand rising from 58 to 68, to ensure supply can meet accelerating demand. Visibility into future orders has improved significantly, reducing execution risk.

The Verdict

Broadcom’s Q1 2026 results are best understood not as a single quarter but as a proof point in an ongoing strategic metamorphosis. The company has successfully positioned itself as the indispensable infrastructure partner for the hyperscalers building the AI-powered internet, and it has done so with a differentiated technical approach, a formidable manufacturing moat, and financial metrics that most technology companies can only aspire to.

The initial flat reaction from investors gave way, correctly, to a more considered verdict once the analyst call details emerged. At its core, Broadcom is telling a story about structural demand, custom silicon superiority, and the transition to mass-market AI deployment. It is a compelling story, and the numbers are backing it up.
